As artificial intelligence (AI) develops at an overwhelming pace, humans may face an era in which they cannot distinguish what is true from what is not. Not so long ago, a video was released online that showed Barack Obama, former President of the United States (US), denouncing President Donald Trump. Although the face and the voice were just like Obama’s, the video turned out to be a fake, made to warn about the serious harm that “deepfakes” might cause. Therefore, the Sungkyun Times (SKT) introduces what deepfakes are, the problems they can cause, and how mankind needs to deal with them.

What Are Deepfakes?

Definition and Origin of Deepfakes

Deepfakes, unlike the face swapping found in applications such as SNOW, are AI-generated images or videos of one person with the face of another. In December 2017, a user named “DeepFakes” posted manipulated explicit videos featuring the faces of famous celebrities on the website Reddit. Since then, “deepfakes” has become a word that refers to such videos and pictures. In January 2018, a free application called FakeApp was released, which enabled users to create deepfakes easily. Because it made creating deepfakes so easy, FakeApp drew a lot of attention from around the world and was downloaded more than 120,000 times in the first half of this year.

How Do Deepfakes Work?

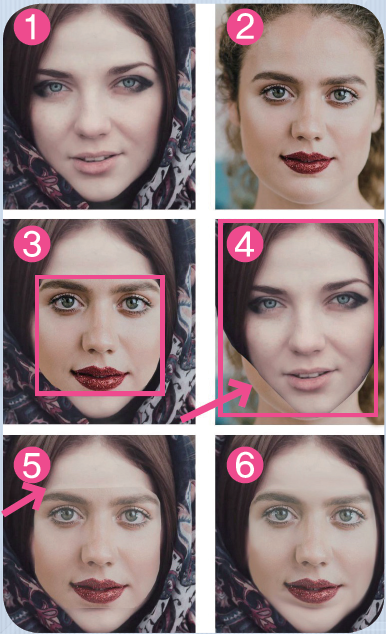

The principle of deepfakes is quite simple. Deepfakes are based on a form of AI known as “deep learning,” a method that here takes hundreds or thousands of photos of a targeted person and compares them with a face in the source video. For instance, in order to transfer the face of person A onto person B in a sample video, anyone with thousands of images of A can just feed them into an algorithm and wait until the face of B comes to look like A’s. Voices can be faked on the same principle: hundreds of recordings of the targeted person are fed in to reproduce that person’s voice. For now, the result looks unnatural when the person in the source video blinks or makes odd facial expressions. Using FakeApp, however, anyone who understands TensorFlow, a free suite of machine learning tools from Google, and has a powerful personal computer (PC) can create quite believable videos.
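The remark about unnatural blinking hints at one way a suspicious video might be flagged. The Python sketch below is only an illustration under stated assumptions, not the method of any real detection tool: it assumes that some face-tracking step (not shown) has already produced a hypothetical per-frame "eye openness" score between 0 and 1, and the threshold values are made up for the example.

```python
from typing import List


def count_blinks(eye_openness: List[float], closed_below: float = 0.2) -> int:
    """Count blinks as transitions from an open eye to a closed eye.

    eye_openness: hypothetical per-frame scores (1.0 = wide open, 0.0 = shut).
    closed_below: illustrative threshold under which the eye counts as closed.
    """
    blinks = 0
    was_closed = False
    for score in eye_openness:
        is_closed = score < closed_below
        if is_closed and not was_closed:
            blinks += 1  # a new blink starts on this frame
        was_closed = is_closed
    return blinks


def blinks_per_minute(eye_openness: List[float], fps: float,
                      closed_below: float = 0.2) -> float:
    """Convert the blink count into a rate, given the video frame rate."""
    if fps <= 0 or not eye_openness:
        raise ValueError("need a positive frame rate and at least one frame")
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness, closed_below) / minutes


def looks_suspicious(eye_openness: List[float], fps: float,
                     min_blinks_per_minute: float = 4.0) -> bool:
    """Flag a clip whose blink rate falls below an assumed minimum.

    The minimum of 4 blinks per minute is an arbitrary example value.
    """
    return blinks_per_minute(eye_openness, fps) < min_blinks_per_minute


# Usage: a one-minute clip at 30 frames per second with only two blinks.
clip = [1.0] * 1800
clip[300:305] = [0.1] * 5
clip[1200:1205] = [0.1] * 5
print(looks_suspicious(clip, fps=30))
```

A low blink rate alone proves nothing, since real people sometimes blink rarely and better fakes may blink normally, so a check like this could at most be one signal among many.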

Problems That Deepfakes Might Cause

1. Easier Production of Fake Pornography

The biggest problem that deepfakes may bring about is that they make producing fake pornography much easier. It is such a huge issue, in fact, that the word “deepfakes” sometimes refers specifically to pornography manipulated with this technology. Not only famous Hollywood actors but also some K-pop singers have become victims of manipulated videos. Even worse, now that many people casually upload their selfies online, anyone can be a potential victim of deepfake pornography. In other words, deepfakes can be abused as a new type of digital sexual crime. Digital sexual crime includes distributing sexually explicit pictures or videos without the consent of the person in them and is usually committed by an ex-partner out of spite. Since anyone with only a set of photos of a targeted person can fabricate videos using deepfake technology, it is much more difficult for victims to find out who distributed deepfake pornography in the first place. Moreover, ordinary people may not easily notice that deepfakes bearing their faces are being spread, and even the police find it difficult to distinguish deepfakes from real videos just by monitoring the web.

2. Political Abuse

Even though deepfakes first targeted Hollywood stars, they may aim more and more at politicians in the near future. Indeed, there was a deepfake video in which German Chancellor Angela Merkel makes a Euro-centric remark with her face blended onto Donald Trump’s. Even though the video was made just for fun, it shows that deepfakes could be used for political propaganda by spreading distorted information. Similarly, Marco Rubio, a US senator from Florida, warned that deepfakes might pose an international threat if someone fabricated a video of a US leader, or of an official from North Korea or Iran, warning of an impending disaster. Domestically, meanwhile, there is a possibility that a candidate in a nationwide election could spread deepfakes of a rival candidate to manipulate public opinion. Indeed, lawmakers and intelligence officials warn that deepfakes may be abused during the upcoming US midterm elections in November. In short, elections may no longer be fair and transparent if deepfakes hinder the public from distinguishing what is true from what is not. Moreover, when a politician’s fake remarks in a deepfake touch on sensitive issues such as religion, race, and minorities, they can cause confusion throughout society.

Possible Solutions to Handle Deepfakes

1. Stricter Regulations and Standards

In Korea, a person who produces deepfake pornography and spreads it online can be accused of an invasion of privacy, cyber defamation, or a violation of criminal law. Nevertheless, punishments for making and distributing deepfake pornography are too soft at the moment; deepfake pornography is not covered by the Sexual Violence Prevention and Victims Protection Act in Korea. As a result, those who make and distribute deepfake pornography are fined less than 5 million won or given a sentence of up to a year. There should be punishments not only for deepfake pornography but also for any kind of deepfake that causes personal and social harm. As a possible approach, the concept of “false light” could be introduced alongside defamation. Unlike defamation, which requires damage to the victim’s reputation in the community as a precondition, “false light” can protect the victim in any situation where he or she is emotionally hurt. Therefore, if the current concept of defamation is supplemented with “false light,” ordinary people, whose reputation in society is hard to judge by any clear standard, would be properly protected by law.

2. Efforts Needed by the Government and Society

According to global research company Gartner, people will consume more AI-generated false information than true information by 2022, which suggests that the influence of deepfakes will be felt worldwide. Therefore, the government needs to work on distinguishing and detecting the abuse of deepfakes. Indeed, the US Defense Department has produced tools for catching deepfakes through the Media Forensics (MediaFor) program run by the US Defense Advanced Research Projects Agency (DARPA). In Korea, the Ministry of Science and ICT (MSIT) also held the “AI Research and Development (R&D) Challenge” this year, where participants could develop AI to automatically detect deepfakes. Companies and online communities also need to work on overcoming the harmful effects of deepfakes. First of all, companies need to establish new ethical standards regarding AI. For instance, the Microsoft Corporation has introduced AI ethics guidelines, which state that AI needs to be developed for the better, not for the worse. Secondly, online communities, which are hotbeds for deepfake content, must actively monitor deepfakes. The image hosting site Gfycat, for example, has removed posts that were confirmed to be deepfakes and banned the users who uploaded them.

3. Media Literacy: An Educational Approach

Last but not least, civic groups and schools should educate individuals in order to spread media literacy over the long term. According to a report by the Korea Creative Content Agency (KOCCA), media literacy is the ability to understand the messages that information conveys, rather than its structure or technology. Like MediaSmarts in Canada, a civic group that conducts education programs for parents and teachers on media issues, civic groups need to put effort into educating the public. In other words, it is important that people watching videos check the source of the information and judge whether what the person in the video says sounds far-fetched.

Just as filmmakers recreated actor Paul Walker, who was killed in a car crash before he finished shooting the movie Furious 7, deepfakes can be put to positive use in the computer graphics industry. Nevertheless, it is true that deepfakes may make it harder for people to distinguish what is true from what is not. In order to overcome the problems that deepfakes may cause, it is time for not only the government but all other areas of society to take measures to face a near future with deepfakes.